ASL Assist Smart-Home Controller

Hackathon Project | UHackathon – University of Washington Tacoma

Duration

24 hours (Hackathon)

Team Size

4 people

Tools Used

Azure Custom Vision, JavaScript, Python, Webcam API, Smart-Home APIs

Project Overview

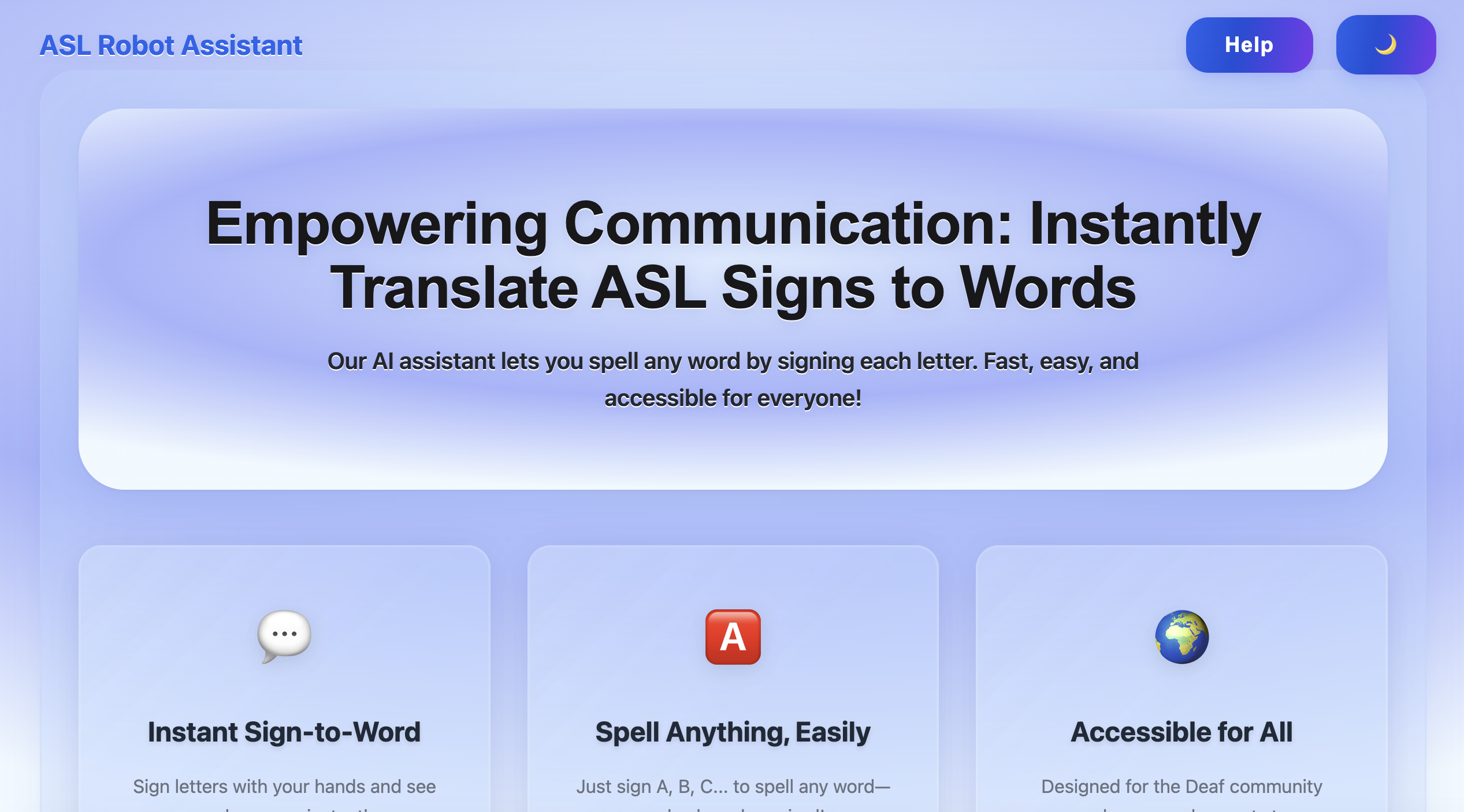

Built in 24 hours at UHackathon (University of Washington Tacoma), this project is a smart-home controller driven by American Sign Language (ASL) gestures. The goal was an accessible interface that lets users control their smart-home devices through ASL hand gestures captured by a webcam.

Technical Implementation

We built a real-time gesture interface that ties a webcam feed to our Azure Custom Vision model. The model classifies each frame as an ASL letter (A-Z), and JavaScript routes that output to Python services, which in turn call the smart-home APIs.
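As a rough illustration of the first routing step, the sketch below picks the most likely letter out of a classification result. It assumes the result is a JSON object with a `predictions` list whose entries carry `tagName` and `probability` fields, and that the model's tags are the single letters A-Z. The `top_letter` helper and the 0.80 cutoff are hypothetical and are not taken from the original project.

```python
from typing import Optional

# Assumed confidence cutoff; the project's actual value is not documented.
MIN_PROBABILITY = 0.80


def top_letter(response: dict, min_probability: float = MIN_PROBABILITY) -> Optional[str]:
    """Return the most probable A-Z letter, or None if nothing is confident enough."""
    predictions = response.get("predictions", [])

    # Keep only predictions whose tag is a single ASCII letter.
    letters = [
        p for p in predictions
        if len(p.get("tagName", "")) == 1
        and p["tagName"].isascii()
        and p["tagName"].isalpha()
    ]
    if not letters:
        return None

    best = max(letters, key=lambda p: p.get("probability", 0.0))
    if best.get("probability", 0.0) < min_probability:
        return None
    return best["tagName"].upper()


# Hand-written sample payload, not real model output.
sample = {
    "predictions": [
        {"tagName": "A", "probability": 0.93},
        {"tagName": "B", "probability": 0.04},
    ]
}
print(top_letter(sample))
```

Returning `None` for low-confidence frames lets the caller ignore frames in which no clear sign is visible instead of acting on a weak guess.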

Key Features

- Real-time ASL gesture recognition using webcam feed

- Azure Custom Vision model integration for A-Z letter detection

- JavaScript-based gesture processing and routing

- Python services for smart-home API integration

- Responsive and accessible user interface

- Smart-home device control through ASL gestures

- Real-time feedback and gesture confirmation
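One plausible way to implement gesture confirmation is to require the same letter across several consecutive frames before acting on it. The sketch below shows that idea only; the `GestureConfirmer` class and the default of five frames are assumptions for illustration, not the project's documented behavior.

```python
from typing import Optional


class GestureConfirmer:
    """Confirm a letter once it has been seen in N consecutive frames."""

    def __init__(self, required_frames: int = 5):
        self.required_frames = required_frames
        self._candidate: Optional[str] = None
        self._streak = 0

    def update(self, letter: Optional[str]) -> Optional[str]:
        """Feed one frame's letter (or None); return it when it becomes confirmed."""
        if letter is None or letter != self._candidate:
            # A new letter, or no detection, restarts the streak.
            self._candidate = letter
            self._streak = 0 if letter is None else 1
        else:
            self._streak += 1

        # Fire exactly once, on the frame where the streak reaches the threshold.
        if letter is not None and self._streak == self.required_frames:
            return letter
        return None


confirmer = GestureConfirmer(required_frames=3)
frames = ["A", "A", "B", "B", "B", "B", None]
confirmed = [confirmer.update(frame) for frame in frames]
print(confirmed)  # [None, None, None, None, 'B', None, None]
```

Because the confirmer fires only on the frame where the streak first reaches the threshold, holding a sign steady triggers a single action and not one action per frame.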

Technology Stack

- Azure Custom Vision for machine learning

- JavaScript for frontend processing

- Python for backend services

- Webcam integration for real-time gesture capture

- Smart-home APIs for device control

- Responsive web interface
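To show how the backend piece of this stack might hand a recognized letter to a device, here is a minimal dispatch sketch. The letter-to-command table, the device names, and the `send` callback are all hypothetical; the write-up does not say which letters or smart-home APIs were used, so a real service would replace `send` with a call to its own device API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class DeviceCommand:
    device: str
    action: str


# Hypothetical mapping from ASL letters to device commands.
LETTER_COMMANDS: Dict[str, DeviceCommand] = {
    "L": DeviceCommand("living_room_light", "toggle"),
    "T": DeviceCommand("thermostat", "raise"),
    "D": DeviceCommand("front_door", "lock"),
}


def dispatch(letter: str, send: Callable[[DeviceCommand], None]) -> Optional[DeviceCommand]:
    """Look up the command for a letter and pass it to `send`; ignore unmapped letters."""
    command = LETTER_COMMANDS.get(letter.upper())
    if command is None:
        return None
    send(command)
    return command


# Stand-in for a real smart-home API client: just record what would be sent.
sent: List[DeviceCommand] = []
dispatch("L", sent.append)
dispatch("Q", sent.append)  # unmapped letter, nothing is sent
print(sent)
```

Keeping the mapping in a plain table separates the choice of gestures from the device integration, so either can change without touching the other.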

Impact

This project demonstrated how computer vision, machine learning, and accessibility-focused design can be combined in a smart-home controller. It showed that ASL can serve as a natural interface for controlling technology, making smart homes more accessible to the deaf and hard-of-hearing community.